Katrin Wagner

Head of Investment Content Switzerland

Chief Investment Strategist

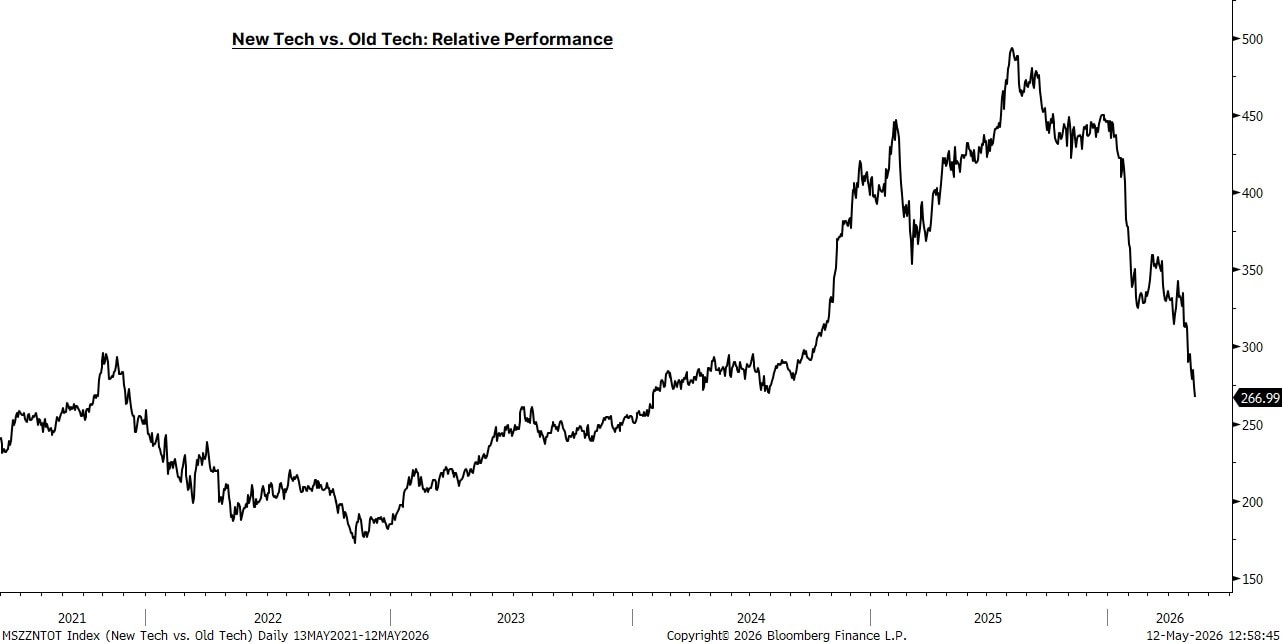

AI is reshaping the technology landscape beyond the most visible GPU winners.

The next phase is less about training models alone and more about running AI continuously across search, coding, customer service, enterprise workflows and agents.

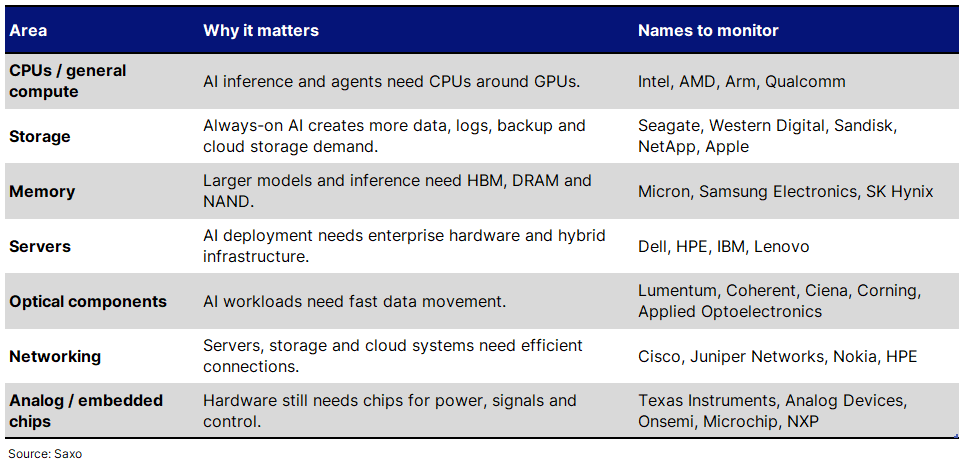

That shift is bringing older technology categories back into focus: CPUs, memory, storage, servers, networking, optical components and analog chips.

For investors, the question is not only “who makes the AI chip?” but also “who supplies the full hardware stack around it?”

The risks are still important: many of these are cyclical hardware markets, and some stocks may already reflect high AI expectations.

The first phase of the AI trade was dominated by GPUs. That made sense. Training large AI models requires huge amounts of parallel processing power, and GPUs became the most visible bottleneck.

But AI is now moving from training to deployment. It is being embedded into daily workflows: search, software development, customer service, productivity tools, finance, healthcare, industrial systems and AI agents. That means AI needs to be available all the time, process more queries, store more data, retrieve more context and move information quickly between systems. The hardware requirement therefore expands beyond the accelerator itself into more compute capacity, memory, storage space, servers and connectivity.

That changes what matters in technology. AI does not run on GPUs alone. It needs a full hardware and data stack around it:

GPUs to accelerate AI workloads.

CPUs to coordinate tasks and manage system-level work.

Memory to keep data close to compute.

Storage to hold the data, logs and history.

Servers to package and deploy the hardware.

Networking and optical links to move data between systems.

Analog and embedded chips to manage power, signals and control functions.

This is how AI is changing the tech landscape. The market is starting to look beyond the headline AI winners and into the older hardware categories that make AI usable at scale.

GPUs are critical for AI acceleration, but CPUs still handle much of the system-level work around AI. They help with data loading, scheduling, input/output, databases, retrieval systems, orchestration and multi-step workflows.

This becomes more important as AI shifts from model training to always-on usage. A chatbot, coding assistant or AI agent has to retrieve information, check context, call tools, manage memory and respond repeatedly. That creates more demand for general-purpose compute around the GPU layer.
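The CPU-side work described above can be illustrated with a minimal Python sketch of one turn of a hypothetical AI-agent request. Every step name and the CPU/GPU assignment below are simplifying assumptions, not any vendor's actual architecture. The point is only that a single GPU model call is wrapped in several general-purpose steps that typically run on CPUs.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Step:
    name: str
    device: str  # "cpu" or "gpu": where this step would typically run (an assumption)


@dataclass
class AgentTurn:
    query: str
    steps: List[Step] = field(default_factory=list)

    def run(self, name: str, device: str) -> None:
        # Only records the step; a real system would do the actual work here.
        self.steps.append(Step(name, device))


def handle_request(query: str) -> AgentTurn:
    """One hypothetical agent turn: a single model call wrapped in orchestration work."""
    turn = AgentTurn(query=query)
    turn.run("parse request and load session state", "cpu")
    turn.run("retrieve context from search index or vector database", "cpu")
    turn.run("assemble prompt and context window", "cpu")
    turn.run("model inference", "gpu")
    turn.run("call external tool or API", "cpu")
    turn.run("update conversation memory and logs", "cpu")
    return turn


turn = handle_request("Summarise last quarter's sales")
cpu_steps = [s.name for s in turn.steps if s.device == "cpu"]
gpu_steps = [s.name for s in turn.steps if s.device == "gpu"]
print(f"{len(cpu_steps)} CPU-side steps, {len(gpu_steps)} GPU-side step(s)")
```

In this simplified picture, an assistant or agent repeats the loop on every turn, and an agent may make several tool calls per turn. The surrounding CPU work therefore grows with usage, not only with model size.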

Companies to monitor: Intel, AMD, Arm.

AI creates and reuses large amounts of data. Training datasets, inference logs, enterprise data lakes, vector databases, customer records, search indexes, model outputs and compliance archives all need to be stored and retrieved.

This brings hard drives, flash storage and enterprise storage back into focus. Hard drives still matter for large-scale cloud storage because they offer a lower cost per terabyte, while flash storage is preferred where speed and low latency matter.
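One common way to combine the two media is tiering. Frequently accessed ("hot") data sits on flash, while rarely accessed ("cold") data, such as old logs and compliance archives, sits on hard drives with a lower cost per terabyte. The Python sketch below illustrates the idea. The access-count threshold and the sample datasets are hypothetical, and real storage systems use far more sophisticated placement policies.

```python
from typing import Dict

HOT_THRESHOLD = 100  # hypothetical: reads per day at or above which data counts as "hot"


def choose_tier(reads_per_day: int, threshold: int = HOT_THRESHOLD) -> str:
    """Place frequently read, latency-sensitive data on flash; the rest on hard drives."""
    return "flash" if reads_per_day >= threshold else "hdd"


def plan_placement(datasets: Dict[str, int]) -> Dict[str, str]:
    # Map each dataset name to a storage tier based on its access frequency.
    return {name: choose_tier(reads) for name, reads in datasets.items()}


# Illustrative workloads with made-up access rates, not real measurements.
datasets = {
    "vector_index": 50_000,  # queried on every retrieval step
    "recent_inference_logs": 500,
    "training_data_archive": 2,
    "compliance_archive": 0,
}
for name, tier in plan_placement(datasets).items():
    print(f"{name:<24} -> {tier}")
```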

There is also a consumer-device angle. If more AI features run locally on PCs, compact desktops and workstations, memory and storage intensity can rise outside the data centre too. Apple’s Mac mini and Mac Studio availability issues are a useful signal of how broader memory constraints can spill into consumer hardware. Apple is not a pure storage play, but the signal matters.

Companies to monitor: Seagate, Western Digital, Sandisk, NetApp, Apple.

Memory is becoming one of the clearest beneficiaries of AI hardware demand. Larger models, longer context windows and persistent AI workflows require more high-bandwidth memory, DRAM and NAND.

High-bandwidth memory has already become a key bottleneck for AI accelerators. But broader memory demand also matters as AI inference scales and more applications become AI-enabled.
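One concrete reason longer context windows raise memory needs is the key-value (KV) cache that transformer models keep during inference. For standard multi-head or grouped-query attention, its size grows linearly with context length: roughly 2 (keys and values) × layers × KV heads × head dimension × tokens × concurrent sequences × bytes per value. The Python sketch below applies that formula. The model dimensions are hypothetical round numbers chosen for illustration, not the specification of any particular model.

```python
def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int,
                   context_tokens: int, batch: int = 1, bytes_per_value: int = 2) -> int:
    """Approximate KV-cache size for standard (grouped-query) attention.

    The factor 2 covers keys and values; bytes_per_value=2 assumes 16-bit precision.
    """
    return 2 * layers * kv_heads * head_dim * context_tokens * batch * bytes_per_value


# Hypothetical model shape, for illustration only.
LAYERS, KV_HEADS, HEAD_DIM = 32, 8, 128

for ctx in (8_000, 32_000, 128_000):
    gib = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, ctx) / 2**30
    print(f"{ctx:>7} tokens -> {gib:6.2f} GiB per sequence")
```

Because the cache scales with both context length and the number of concurrent sequences, serving many long-context sessions at once multiplies memory demand. This comes on top of the memory needed to hold the model weights themselves.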

Companies to monitor: Micron, Samsung Electronics, SK Hynix.

AI does not eliminate traditional servers. It can increase the need for them.

Specialised AI servers are required for GPU-heavy workloads, but enterprises also need servers for databases, storage management, inference support, hybrid infrastructure and general workloads around AI deployment.

This is why traditional enterprise hardware providers are back on investor watchlists. The question is whether AI creates a durable server refresh cycle, not just a one-off demand spike.

Companies to monitor: Dell Technologies, Hewlett Packard Enterprise, IBM, Lenovo.

As AI workloads scale, data has to move quickly between servers, storage systems and compute clusters. This supports demand for optical components, fibre, switches, routers and high-speed networking.

Optical links are particularly important because AI workloads require fast, low-latency data movement. Networking companies also matter because AI deployment requires efficient connections across enterprise systems, data centres and cloud infrastructure.
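Why link speed matters can be shown with simple arithmetic: the time to move a block of data is roughly its size divided by the effective link bandwidth. The Python sketch below compares a few link speeds. The payload size, the link speeds and the 80% efficiency factor are assumptions for illustration. Real transfers also incur protocol overhead and per-hop latency that this ignores.

```python
def transfer_seconds(payload_gb: float, link_gbps: float, efficiency: float = 0.8) -> float:
    """Time to move payload_gb gigabytes over a link of link_gbps gigabits per second.

    Converts bytes to bits (x8) and applies an assumed efficiency factor.
    """
    if link_gbps <= 0 or not 0 < efficiency <= 1:
        raise ValueError("link speed must be positive and efficiency in (0, 1]")
    return payload_gb * 8 / (link_gbps * efficiency)


PAYLOAD_GB = 100  # hypothetical: e.g. a model checkpoint or a batch of training data
for speed in (100, 400, 800):  # illustrative link speeds in Gb/s
    print(f"{speed:>4} Gb/s -> {transfer_seconds(PAYLOAD_GB, speed):6.2f} s")
```

Under these assumptions, moving 100 GB takes 10 seconds at 100 Gb/s and 1.25 seconds at 800 Gb/s. When such transfers repeat constantly across a cluster, faster links translate directly into less idle compute.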

Companies to monitor: Lumentum, Coherent, Ciena, Corning, Applied Optoelectronics, Cisco, Juniper Networks, Nokia, HPE.

AI hardware still needs chips that manage power, signals and control functions. This is where analog and embedded semiconductor companies become relevant.

These are not the most visible AI names, but they sit inside servers, industrial systems, data-centre hardware, autos and connected devices. As AI spreads into more physical systems, the need for power management, sensors, signal conversion and embedded control can rise.

Companies to monitor: Texas Instruments, Analog Devices, Onsemi, Microchip Technology, NXP.

This is not a recommendation list. It is a framework for monitoring where AI demand may broaden into older technology categories.

The key risk is that investors take a real theme and stretch it too far.

AI may support demand for CPUs, storage, memory, servers, optical components and networking, but many of these are still cyclical hardware markets. Demand can be lumpy, supply can catch up, pricing can reverse and customers can pause spending if AI monetisation disappoints.

Key risks include:

Slower AI capex: hyperscalers may reduce or delay spending after a heavy investment cycle.

Delayed enterprise adoption: companies may take longer to deploy AI at scale than markets expect.

Supply response: memory, storage or server supply could catch up, reducing pricing power.

Margin pressure: revenue growth may not translate into stronger profits if competition or input costs rise.

Technology substitution: custom chips or specialised architectures could reduce demand for some traditional components.

Valuation risk: many stocks linked to AI hardware have already moved sharply.

AI is changing the tech landscape by expanding the opportunity beyond GPUs. As AI moves from training to everyday deployment, the demand base may broaden into CPUs, storage, memory, servers, optical components, networking and analog chips.

For investors, this is a more practical way to think about the next phase of AI: not only which companies build the AI models, but which companies support the hardware and data stack that keeps AI running.
