Chief Investment Strategist

Mag 7 earnings confirmed that AI demand is still scaling, with stronger cloud growth and higher capex guidance — but stock reactions showed investors are now pricing the quality and payback of AI spend, not just the size of the budget.

Market reactions were nuanced, and the AI story is now more selective: investors rewarded clearer revenue flow-through and questioned capex without near-term offsets.

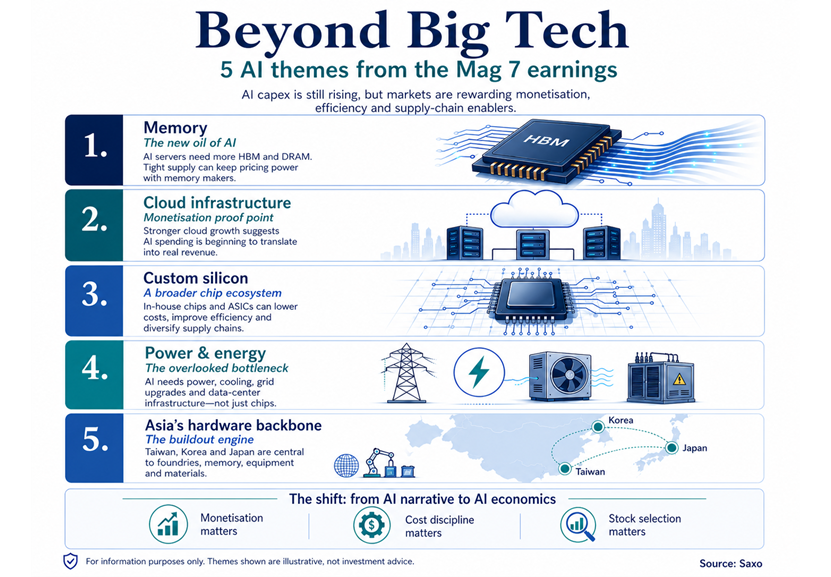

Five themes investors may monitor stand out from the earnings cycle: memory, cloud infrastructure, custom silicon, power and energy, and Asia’s hardware backbone.

The latest round of Mag 7 earnings was strong on the surface — solid revenue growth, improving cloud trends and continued AI momentum — but the details matter more this time. Crucially, capex guidance was revised higher across hyperscalers, with combined 2026 spend now tracking around $725bn (vs. ~$670bn pre-earnings) and Meta lifting its range to $125–145bn. Alphabet and Microsoft also flagged capacity constraints, signalling demand is running ahead of supply.
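To put the revision in proportion, the arithmetic behind those figures can be sketched in a few lines. This is a rough illustration only: it treats the approximate numbers quoted above as exact point estimates, and the variable and function names are hypothetical.

```python
# Approximate figures quoted in the text, in USD billions.
# Assumption: the "~" and "around" values are treated as exact for illustration.
CAPEX_PRE_EARNINGS_BN = 670.0
CAPEX_POST_EARNINGS_BN = 725.0
META_RANGE_BN = (125.0, 145.0)


def pct_change(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100.0


def midpoint(low: float, high: float) -> float:
    """Midpoint of a guidance range."""
    return (low + high) / 2.0


revision_bn = CAPEX_POST_EARNINGS_BN - CAPEX_PRE_EARNINGS_BN
revision_pct = pct_change(CAPEX_PRE_EARNINGS_BN, CAPEX_POST_EARNINGS_BN)
meta_mid_bn = midpoint(*META_RANGE_BN)

print(f"Combined capex revision: +${revision_bn:.0f}bn ({revision_pct:.1f}%)")
print(f"Meta guidance midpoint: ${meta_mid_bn:.0f}bn")
```

On these inputs the upward revision is roughly $55bn, or about 8% above the pre-earnings estimate, and the midpoint of Meta's range is $135bn.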

The first-order implication is clear: the AI buildout is intact and still scaling, which keeps the broader AI value chain — from semiconductors and memory to networking, power and data centres — in focus.

But the second-order shift is just as important: not all stocks benefited.

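Market reactions diverged sharply as investors differentiated between companies showing clearer revenue flow-through from AI spending and those raising capex without near-term offsets.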

In other words, the market no longer treats higher AI capex as an automatic reason to buy. The story has become more nuanced and more selective.

Adding to this, inflation risks are re-emerging — particularly through energy and component costs. As input costs rise alongside capex, the bar for monetisation becomes higher, not lower.

Taken together, this marks a shift from AI enthusiasm to AI economics: the theme remains strong, but execution, cost discipline and positioning across the value chain matter more.

Against this backdrop, we highlight five market themes investors may monitor as the AI cycle evolves.

Memory has become one of the clearest bottlenecks in the AI buildout. AI servers need significantly more high-bandwidth memory than traditional servers, and industry estimates point to a sharp rise in DRAM pricing into 2026. This is already visible in hyperscaler commentary: Meta and Microsoft both pointed to higher component pricing as one reason capex is moving higher, but neither signalled a pullback in AI investment.

For investors, this suggests the AI cost inflation problem may also be a revenue opportunity for memory suppliers. If hyperscalers continue absorbing higher memory costs to secure capacity, pricing power may stay with HBM, DRAM and NAND producers for longer.

Illustrative names investors often monitor in this area include SK Hynix, Micron, Samsung Electronics and memory-linked ETFs, which can be explored through Saxo’s Memory Stocks & ETFs shortlist. These names are included only as examples for reference and are not recommendations.

The key risk is that memory remains cyclical. If new supply comes online faster than expected, or if AI capex growth slows, pricing power could fade quickly.

Cloud was the most important evidence that AI is moving from spending promise to revenue reality. Alphabet’s Google Cloud grew roughly 63% YoY, driven by enterprise AI demand, while Amazon’s AWS rose about 28% YoY, its fastest pace in several quarters, with strong operating income. Microsoft’s Azure grew around 39–40% YoY, reflecting continued demand for AI-linked enterprise software and cloud services.

For investors, this matters because cloud is where AI capex begins to show commercial payback. If AI workloads keep moving into production, the beneficiaries are not just the cloud platforms but also the suppliers behind them: networking, data centre infrastructure, custom silicon, cooling and power management.

Illustrative names investors often monitor include Arista Networks for cloud networking, Broadcom and Marvell for custom silicon, and Vertiv and Eaton for data centre power and cooling. These examples are for reference only and not recommendations. Saxo’s AI Value Chain shortlist can be used as a broader reference point for investors looking beyond the largest tech platforms.

It also opens an emerging angle around AI-focused “neoclouds” — specialist cloud providers that rent GPU and AI compute capacity to companies that need access faster than hyperscalers can provide. Examples often discussed in this space include CoreWeave, Nebius and Applied Digital. These are illustrative only and not recommendations, and the segment can be more volatile and execution-sensitive.

The key risk is that cloud revenue growth may still not fully offset the scale of infrastructure spending. Margins could remain under pressure if AI usage grows but pricing, utilisation or enterprise adoption disappoints.

The hyperscalers are increasingly trying to control more of their AI stack, including chip design. Google’s TPUs, Amazon’s Trainium and Meta’s MTIA programme all point to the same strategic goal: lower unit costs, improve performance for specific workloads and reduce dependence on a narrow external supplier base. This is especially relevant for inference, where efficiency and scale matter as AI usage expands.

For investors, this does not necessarily mean the GPU leaders lose relevance overnight. Nvidia and AMD remain central to the AI buildout, but custom silicon could gradually change where future profit pools sit, especially in inference. ASIC designers, advanced packaging, foundries and semiconductor equipment suppliers may gain importance as hyperscalers diversify their hardware roadmaps.

Illustrative names investors often monitor include Broadcom and Marvell for custom ASICs, TSMC for foundry exposure, ASML for advanced equipment and Amkor or ASE Technology for packaging. These names are examples for reference only and are not recommendations. This also reinforces Asia’s central role in the AI supply chain, particularly across Taiwan, Korea and Japan.

The key risk is execution. Custom silicon is difficult to scale, and incumbent GPU providers still have deep software ecosystems, customer relationships and performance advantages. The near-term risk for Nvidia and AMD is more about valuation expectations and future share of inference workloads than an immediate collapse in demand.

AI is not only compute-intensive; it is power-intensive. As hyperscalers raise capex, the limiting factor is increasingly not just chips, but whether enough reliable power, grid capacity and cooling infrastructure can be secured to run the data centres. This is becoming even more important as energy costs rise and inflation risks return to the macro conversation.

For investors, this makes power one of the most important second-order AI themes. The AI buildout can support demand for utilities, nuclear and gas power providers, grid equipment, electrical systems, cooling solutions and data centre REITs.

Illustrative names investors often monitor include Constellation Energy and Vistra for power generation, NextEra Energy for renewables and grid-linked exposure, Bloom Energy and GE Vernova for distributed generation and grid/energy solutions, Eaton and Vertiv for electrical systems and cooling, and Equinix and Digital Realty for data centre real estate. These names are examples for reference only and are not recommendations. They are not always viewed as “AI stocks”, but they may be critical enablers of AI capacity growth.

The key risk is that power is also where policy, permitting and financing risks are most visible. Delays in grid upgrades, higher electricity prices or more expensive debt funding could slow data centre deployment and make AI economics harder to justify.

The earnings cycle also highlights that while software and AI adoption are still evolving, the hardware backbone is already in full buildout, and that backbone is largely in Asia. As capex scales and bottlenecks emerge, value is concentrating in the parts of the supply chain that can deliver physical capacity: advanced semiconductors, memory, equipment and manufacturing.

For investors, this shifts focus toward Asia’s role as the production engine of AI. Taiwan’s foundry ecosystem, Korea’s dominance in memory, and Japan’s strength in semiconductor equipment and materials are central to enabling the current phase of the AI cycle. These segments do not depend on near-term AI monetisation; they benefit directly from the buildout itself.

The key risk is that this hardware-led advantage also comes with geopolitical exposure. Supply chain concentration, export controls and Taiwan-related risks mean Asia may remain structurally critical, but also more sensitive to policy and geopolitical shifts.

The AI story has not weakened; it has matured.

The market is shifting from rewarding participation to rewarding execution.

For investors, this marks a move from a single-theme rally to a more nuanced cycle — where identifying the right part of the value chain may matter as much as identifying the theme itself.
